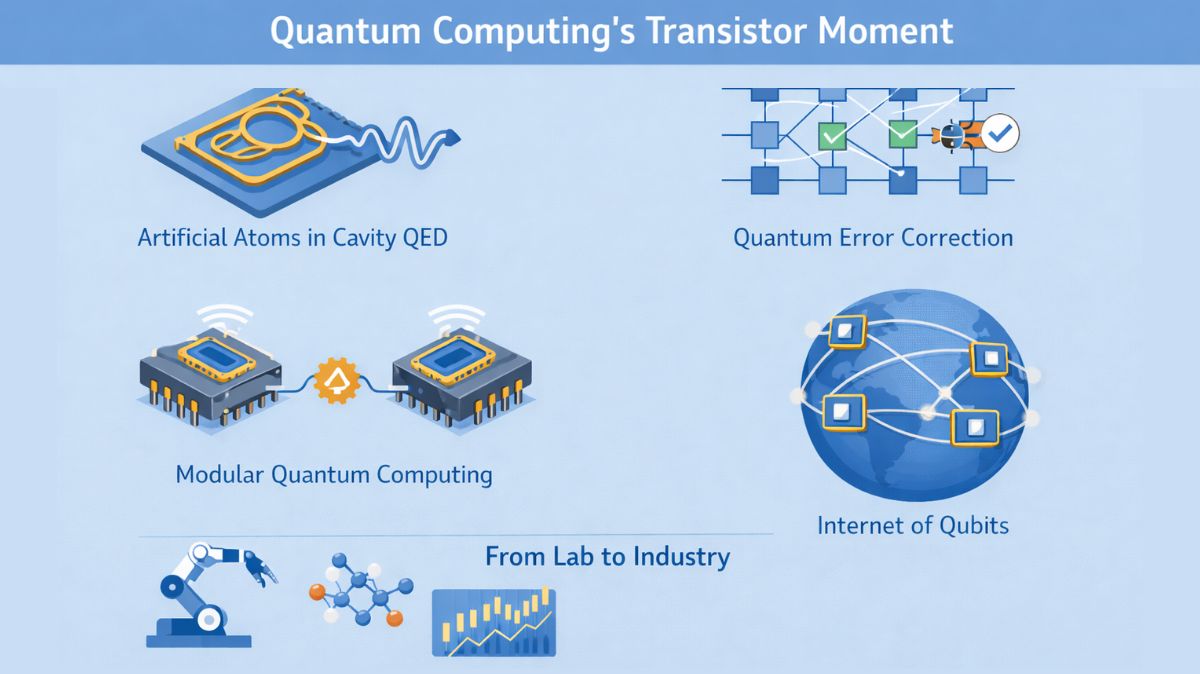

Researchers have developed a quantum error correction (QEC) scheme called adaptive syndrome extraction that aims to substantially improve the performance of quantum computers. The protocol addresses a recurring problem in existing QEC circuits: high error rates and significant overhead, which frequently cause the circuits to introduce more errors than they fix.

Quantum error correction is essential for overcoming the intrinsic fragility of quantum information. However, the process of probing the system to identify and fix errors can itself introduce extra noise through faulty gates, idling errors, and incorrect corrections. This frequently results in the paradoxical situation where error-corrected logical qubits have shorter coherence times than unencoded physical qubits. Implementing non-local quantum low-density parity-check (qLDPC) codes on hardware limited to local gates makes the problem even more difficult.

Adaptive syndrome extraction mitigates these problems by making decisions in real time while the syndrome is being extracted. Rather than carrying out every available measurement, the protocol “short-circuits” syndrome extraction by measuring only the stabiliser generators that are likely to yield useful syndrome information. This choice is made dynamically, based on measurement outcomes from the current QEC cycle.

How the Adaptive Scheme Operates:

Concatenated code structures are commonly used to implement the protocol.

- Physical qubits are first encoded in a small inner error-detecting code, such as the Iceberg code.

- The logical qubits from several of these inner code blocks then serve as the physical qubits of an outer, high-rate, high-distance qLDPC code, such as a hypergraph product (HGP) code.
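
As a concrete illustration of the inner code: the Iceberg code is usually defined on an even number of qubits with just two stabiliser generators, the all-X operator and the all-Z operator. The Python sketch below computes which of those two checks a given Pauli error flips. The function name and the string encoding of errors are illustrative assumptions, not something taken from the study.

```python
from __future__ import annotations


def iceberg_syndrome(error: str) -> tuple[int, int]:
    """Return (x_check_flipped, z_check_flipped) for a Pauli error string.

    `error` is a string over "IXYZ", one character per physical qubit
    (hypothetical encoding chosen for this sketch).
    """
    if len(error) == 0 or len(error) % 2 != 0:
        raise ValueError("the Iceberg code is defined on an even number of qubits")
    if any(p not in "IXYZ" for p in error):
        raise ValueError("error must only contain the characters I, X, Y, Z")

    # The all-Z check anticommutes with each X or Y factor of the error,
    # so it flips when the number of such factors is odd.
    z_check_flipped = sum(p in "XY" for p in error) % 2
    # The all-X check anticommutes with each Z or Y factor of the error.
    x_check_flipped = sum(p in "ZY" for p in error) % 2
    return x_check_flipped, z_check_flipped


if __name__ == "__main__":
    for err in ["IIII", "XIII", "ZIII", "YIII", "XXII"]:
        print(err, iceberg_syndrome(err))
```

Any single-qubit error flips at least one check, so it is detected, but the two bits do not say which qubit was hit. A two-qubit error such as `XXII` flips neither check, which is why the inner code only detects errors and the outer code is needed for correction.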

In each error-correction cycle, the adaptive scheme proceeds in two phases:

- Initial Measurement: The stabiliser generators of the inner Iceberg code blocks are measured first. The resulting syndrome indicates which blocks contain errors.

- Adaptive Measurement: Because error-detecting codes alone do not provide enough information for full correction, additional syndromes must be extracted from the outer concatenated code. Importantly, only the outer code stabiliser generators with support on qubits in the Iceberg blocks where an error was detected are measured. Generators without such overlapping support are assumed to give a trivial (+1) outcome, so their measurements are skipped. This link between the inner and outer code syndromes greatly reduces the total number of generators measured, especially in the low error rate regime.
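
The selection rule in the adaptive phase can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the researchers' implementation: the function names, the `block_of_qubit` lookup, and the toy check layout are all hypothetical, and syndrome bits use the convention 0 for a +1 outcome and 1 for a -1 outcome.

```python
from __future__ import annotations


def select_outer_checks(
    flagged_blocks: set[int],
    block_of_qubit: list[int],
    check_supports: list[tuple[int, ...]],
) -> list[int]:
    """Return indices of outer checks that touch at least one flagged inner block.

    block_of_qubit[q] is the inner block holding outer-code qubit q.
    check_supports[i] lists the outer-code qubits that check i acts on.
    """
    return [
        i
        for i, support in enumerate(check_supports)
        if any(block_of_qubit[q] in flagged_blocks for q in support)
    ]


def assemble_syndrome(n_checks: int, measured: dict[int, int]) -> list[int]:
    """Fill in the full outer syndrome; skipped checks are assumed trivial (0)."""
    return [measured.get(i, 0) for i in range(n_checks)]


if __name__ == "__main__":
    # Toy layout (hypothetical): 6 outer qubits spread over 3 inner blocks.
    block_of_qubit = [0, 0, 1, 1, 2, 2]
    check_supports = [(0, 2), (1, 4), (3, 5), (4, 5)]

    to_measure = select_outer_checks({1}, block_of_qubit, check_supports)
    print(to_measure)  # checks touching block 1

    # Pretend check 0 fired and check 2 did not.
    print(assemble_syndrome(len(check_supports), {0: 1, 2: 0}))
```

When no inner block reports an error, the selection is empty and no outer checks are measured in that cycle, which is where the savings at low error rates come from.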

Key Benefits of Adaptive Syndrome Extraction:

Circuit-level simulations have shown that the adaptive method has several significant benefits.

- Lower Logical Error Rates: Especially in the critical low error rate regime, the adaptive scheme achieves logical error rates more than an order of magnitude lower than those of non-concatenated and non-adaptive codes. For instance, in simulations using La-cross codes, the adaptive method showed an order of magnitude improvement even at current experimental physical error rates.

- Resource Efficiency: The adaptive scheme uses fewer physical qubits and fewer CNOT gates. Non-concatenated La-cross codes needed roughly five times as many CNOT gates and more than twice as many physical qubits to match its performance.

- Faster QEC Cycles: On some quantum computing architectures, the reduced gate count can shorten QEC cycle times, which helps limit the accumulation of idling errors. Although the circuit depth is not necessarily lower for concatenated codes (the adaptive scheme adds layers), the reduced CNOT count is especially valuable for platforms with limited parallelisation, such as trapped-ion and neutral-atom quantum computers.

- Quantum Weight Reduction: Viewed in space-time, the protocol can be understood as a quantum weight reduction technique. Because the outer code generators are measured adaptively, each physical qubit in the concatenated code participates in fewer checks “on average”. The average check weight is also lower, which reduces the depth of the syndrome extraction circuit and limits the spread of errors. At low error rates, the average check weight and qubit degree approach their ideal values, even outperforming prominent topological codes such as the surface code.

- Fault-Tolerant Universal Logical Computation: Without increasing the space-time cost associated with non-concatenated HGP codes, the researchers have demonstrated that this adaptive system allows for fault-tolerant universal logical computation with []-concatenated HGP codes. Existing Grid Pauli Product Measurement (GPPM) and single-shot state preparation approaches are modified to do this.

- Simulation and Decoding Insights: The method's performance was evaluated with memory experiments that simulated repeated syndrome extraction under a circuit-level noise model. Decoding proceeds in two steps: first, a fixed correction is applied to the inner code blocks to handle detected errors, converting physical errors into logical errors for the outer HGP code. A classical decoder for the outer code then processes these logical errors.

Initially, the concatenated codes with adaptive syndrome extraction did not exhibit single-shot behaviour (where the logical error rate per round stabilises over time). Measuring the full set of generators every few rounds (a process known as “unmasking”) restored single-shot behaviour.
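
One simple way to picture unmasking is as a periodic override of the adaptive selection. The sketch below is an assumption-laden illustration: the article only says "every few rounds", so the `unmask_period` parameter and the function name are hypothetical, and the study's actual schedule may differ.

```python
from __future__ import annotations


def checks_for_round(
    round_index: int,
    unmask_period: int,
    adaptive_checks: set[int],
    n_checks: int,
) -> list[int]:
    """Return the outer checks to measure in a given round (0-indexed).

    Every `unmask_period`-th round measures all checks ("unmasking");
    other rounds measure only the adaptively selected subset.
    A period of 0 disables unmasking entirely.
    """
    if unmask_period > 0 and (round_index + 1) % unmask_period == 0:
        return list(range(n_checks))
    return sorted(adaptive_checks)


if __name__ == "__main__":
    # Hypothetical run: 4 outer checks, unmask every 3rd round.
    for r in range(6):
        print(r, checks_for_round(r, 3, {2, 0}, 4))
```

A shorter period measures the full generator set more often, trading some of the gate savings for more complete syndrome information.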

In comparison to non-concatenated HGP codes, the adaptive system with quantum expander codes demonstrated a lower pseudothreshold and performed worse at very high error rates; nonetheless, its notable gain in performance at low error rates makes it extremely promising.

Potential Applications and Future Directions:

The adaptive syndrome extraction approach is particularly well suited to:

- 2D-Local Implementations of Nonlocal Codes: It could make it easier to implement nonlocal qLDPC codes on superconducting qubits and other architectures limited to local gates. The inner Iceberg code guarantees a certain level of locality by default, and the adaptive nature of the scheme avoids costly nonlocal checks at low error rates.

- Modular QPU Architectures: This approach fits well with proposals for modular quantum processing units (QPUs), in which separate modules are connected (for example, using photonic interconnects). Since error correction is first attempted within individual modules, the slower and noisier inter-module links are used less often, which can improve logical performance and speed up computation.

This study represents a move towards dynamically constructed quantum error correction and real-time decision-making algorithms that lower computational overheads, and it may accelerate the development of large-scale quantum computers. To further improve performance and logical gate capabilities, future studies might investigate alternative inner and outer codes, more concatenation layers, or alternative concatenation schemes. Beyond this particular code architecture, the basic idea, that is, identifying error locations at low cost in order to trigger more costly correction, remains broadly applicable.
