HPC and Quantum Computing

Quantinuum and Japan’s RIKEN research institute have completed the world’s first end-to-end scientific workflow integrated across a leading supercomputer and a trapped-ion quantum computer, a significant advance for the scientific community worldwide. The result marks a shift from building theoretical infrastructure to applying hybrid quantum–high-performance computing (HPC) in practice, with implications for computational biology and materials research.

Overcoming the Classical-Quantum Gap

Japan has long been at the forefront of investment in both classical and quantum computing, but a problem remains: how to move from building cutting-edge technology to demonstrating its useful, integrated application. To address it, the Fugaku supercomputer and Quantinuum’s Reimei trapped-ion quantum computer were integrated as part of a national project commissioned by the New Energy and Industrial Technology Development Organization (NEDO).

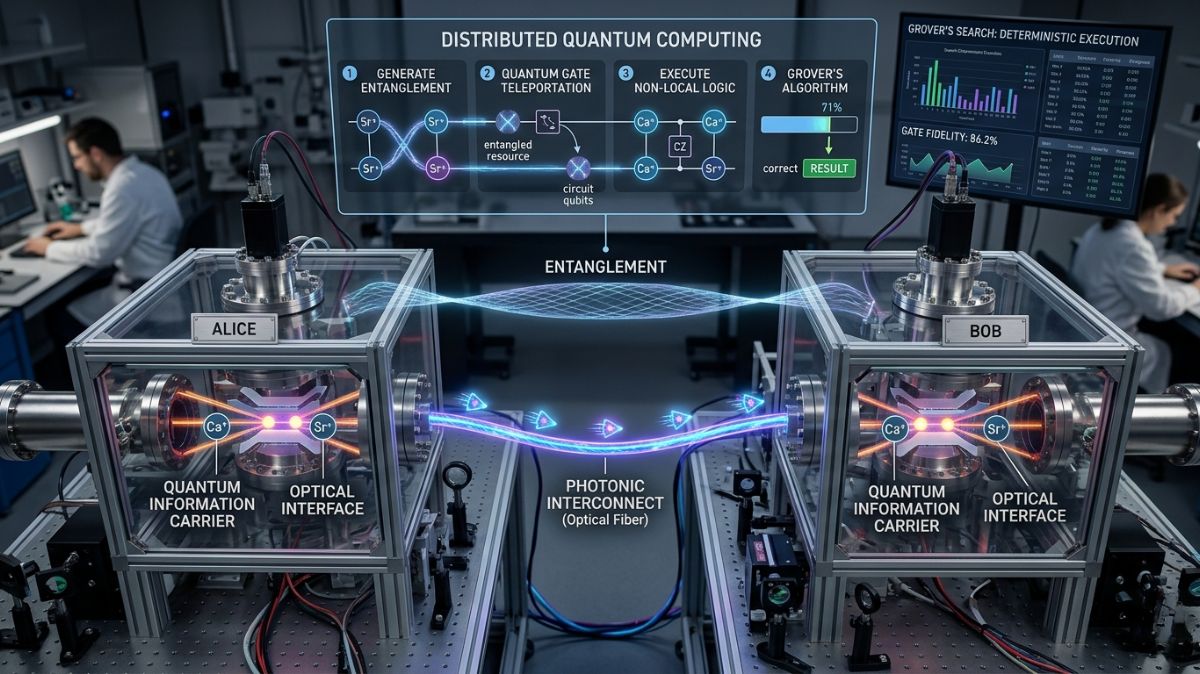

To tackle one of biochemistry’s hardest problems, simulating chemical reactions inside large macromolecules such as proteins, the team used a layered computational framework called ONIOM. Because of subtle electronic effects, the “active site” where the reaction takes place must be modeled with high accuracy, while the surrounding environment can be treated with cheaper, more approximate methods.
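The layered idea can be made concrete with the standard two-layer subtractive ONIOM energy expression. The sketch below is illustrative only: the function name and the sample energies are hypothetical, and it assumes, in line with the division of labor described in this article, that the high-level active-site term is the one a quantum computer would supply.

```python
def oniom2_energy(e_low_real, e_high_model, e_low_model):
    """Two-layer subtractive ONIOM energy.

    e_low_real   : low-level (cheap) energy of the full system
    e_high_model : high-level (accurate) energy of the active-site model
    e_low_model  : low-level energy of the same active-site model
    """
    # Start from the cheap description of everything, then replace the
    # cheap active-site contribution with the accurate one.
    return e_low_real + e_high_model - e_low_model


# Hypothetical energies in hartree, chosen only to show the arithmetic.
total = oniom2_energy(e_low_real=-100.0, e_high_model=-10.5, e_low_model=-10.0)
print(total)
```

Only the small model region is ever evaluated at the expensive level, which is why the approach suits a setup where the accurate method is a scarce resource.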

In this hybrid workflow, the Fugaku supercomputer performs the baseline electronic structure calculations and geometry optimization. Reimei is tasked with refining the treatment of the most intricate electronic interactions at the active site, which usually resist standard classical approximation techniques. Tierkreis, Quantinuum’s workflow system, orchestrates the entire process, handling job scheduling and data transfer across the heterogeneous architectures.

The Hive: AI-Powered Algorithm Analysis

Hardware integration provides the “muscle,” while parallel advances in software provide the “intellect.” Hiverge and Quantinuum have teamed up to apply the Hive, an AI platform, to automated algorithm discovery.

The counterintuitive nature of entanglement and interference makes quantum algorithms notoriously difficult for researchers to design. Using large language models (LLMs), the Hive evolves basic algorithmic sketches into highly optimized, “noise-aware” quantum algorithms.

Hive-ADAPT, a variational quantum algorithm that emerged from this collaboration, has been shown to reach chemical accuracy for molecular ground-state energies while using orders of magnitude fewer quantum resources than state-of-the-art techniques. Dr. David Zsolt Manrique, a quantum chemistry specialist at Quantinuum, observed that the AI converged on domain-expert-level ideas, such as using the “MP2” perturbative method to guide initial circuit parameters, a step that often requires time-consuming manual tuning.
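For readers unfamiliar with the term, “chemical accuracy” is conventionally taken to mean an error of at most about 1 kcal/mol, roughly 1.6 millihartree, relative to a reference energy. The following minimal sketch shows that check; the function name and sample energies are hypothetical and are not taken from the Hive-ADAPT results.

```python
# Conversion factor: 1 hartree is approximately 627.509474 kcal/mol.
KCAL_MOL_PER_HARTREE = 627.509474


def within_chemical_accuracy(e_computed, e_reference, threshold_kcal_mol=1.0):
    """Return True if two energies (in hartree) agree to within the threshold."""
    error_kcal_mol = abs(e_computed - e_reference) * KCAL_MOL_PER_HARTREE
    return error_kcal_mol <= threshold_kcal_mol


# Hypothetical ground-state energies in hartree.
print(within_chemical_accuracy(-1.1370, -1.1373))  # error of 0.3 millihartree
print(within_chemical_accuracy(-1.1300, -1.1373))  # error of 7.3 millihartree
```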

Strategic Power: The NVIDIA Collaboration

Quantinuum’s close partnership with NVIDIA further supports the push for hybrid utility. The NVIDIA Grace Blackwell platform is now being used alongside Helios, Quantinuum’s newest hardware generation, to target markets in advanced AI research and drug development.

A key technical element of this collaboration is NVIDIA NVQLink, an open architecture that enables real-time decoding for quantum error correction (QEC). In an industry-first demonstration, an NVIDIA GPU-based decoder integrated into the Helios control engine improved logical fidelity by more than 3%.

In addition, the jointly developed ADAPT-GQE framework, a transformer-based Generative Quantum AI technique, achieved a 234x speedup in generating training data for complex pharmaceutical molecules such as imipramine. With NVIDIA CUDA-Q, developers can now combine quantum and GPU-accelerated classical computations in a single, unified workflow.

The Path to Fault Tolerance

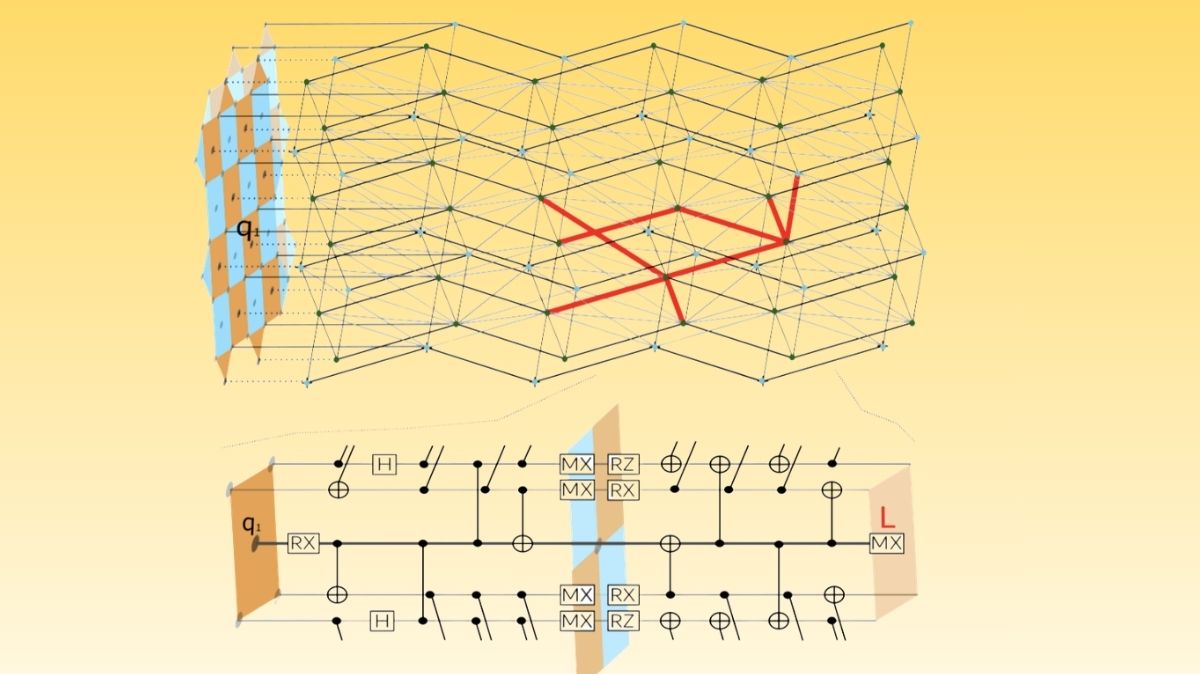

Researchers are making major progress in quantum error correction as the industry moves toward fault tolerance, the “holy grail” of quantum computing. One recent development is “concatenated symplectic double codes,” which combine several error-correcting code families (such as the Iceberg code) to produce logical qubits that are both stable and easy to manipulate.

These codes make use of “SWAP-transversal” gates, which are practically free to implement in software thanks to the all-to-all connectivity of Quantinuum’s QCCD (Quantum Charge-Coupled Device) architecture. Quantinuum’s roadmap targets hundreds of logical qubits with a logical error rate of about 1×10⁻⁸ by 2029.
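One way to picture why a SWAP can cost almost nothing on a fully connected device is to treat it as bookkeeping: rather than physically exchanging two qubit states, the software updates which physical qubit each label refers to. The sketch below illustrates only that relabeling idea. The class and its methods are hypothetical, and it assumes the “free in software” remark refers to this kind of relabeling; it is not a description of Quantinuum’s compiler or of the codes themselves.

```python
class LabeledRegister:
    """Tracks which physical qubit each program-level label points to."""

    def __init__(self, num_qubits):
        self.physical_of = list(range(num_qubits))  # label -> physical index
        self.physical_ops = []  # operations that would be sent to hardware

    def swap(self, a, b):
        # Relabel only: exchange two map entries, emit no physical operation.
        self.physical_of[a], self.physical_of[b] = (
            self.physical_of[b],
            self.physical_of[a],
        )

    def gate(self, name, *labels):
        # Resolve labels to physical qubits at the moment the gate is issued.
        targets = tuple(self.physical_of[q] for q in labels)
        self.physical_ops.append((name, targets))


reg = LabeledRegister(4)
reg.gate("H", 0)
reg.swap(0, 3)        # costs nothing on the device
reg.gate("CX", 0, 1)  # now acts on physical qubits 3 and 1
print(reg.physical_ops)
```

The relabeling is only harmless when any pair of qubits can interact afterward, which is where all-to-all connectivity comes in.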

A Worldwide Transition

The success of the Fugaku-Reimei integration gives HPC centers around the world a concrete model. By showing that quantum devices can be integrated into existing research infrastructure to complement powerful classical systems, the experiment demonstrates that hybrid computing is now an operational reality rather than an aspiration.

As the technology matures, these AI-assisted, GPU-accelerated workflows are expected to scale to more realistic and industrially relevant problems, such as cybersecurity and materials discovery. Japan is sending a clear message to the global research community: the era of isolated quantum experiments is ending, and integrated, hybrid discovery has begun.
