Quantum Hyperdimensional Computing: Brain-Inspired AI Takes Flight on a 156-Qubit Quantum Processor

Building a computer that matches the efficiency and operation of the human brain has long been one of the hardest goals in advanced computation. Many proposed quantum approaches have fallen short, often relying on resource-intensive adaptations of conventional algorithms that do not fully exploit quantum mechanics. A new paradigm called Quantum Hyperdimensional Computing (QHDC) aims to close this crucial gap.

Researchers from Cleveland Clinic Research, Case Western Reserve University, and the University of Louisiana at Lafayette developed this resource-efficient framework, which maps the core ideas of brain-inspired Hyperdimensional Computing (HDC) directly onto a quantum computer's native operations.

QHDC is more than an abstract idea. The research team successfully ran a 156-qubit algorithm on IBM's Heron r3 quantum processor, an important physical realization that lays the groundwork for a new class of quantum neuromorphic algorithms.


The Quantum Leap in Neuromorphic Architectures

Neuromorphic computing, which builds hardware around neurobiological principles, is widely seen as the next step toward reliable and resource-efficient artificial intelligence. Earlier attempts to combine neuromorphic ideas with quantum computing have often forced algorithms designed for classical bits to fit the constraints of quantum mechanics, producing intricate circuit designs and heavy resource requirements.

QHDC sidesteps this problem because it is designed for quantum phenomena from the start. Led by researchers including Bryan Raubenolt, Rui-Hao Li, and Fabio Cumbo, the team designed the system so that the basic components of the brain-inspired model correspond naturally to the operations qubits perform best.

The resulting framework uses quantum state manipulation, averaging procedures, and phase transformations to carry out the fundamental computational tasks of storing, combining, and comparing data representations. This intrinsically resource-efficient mapping is what makes QHDC a serious candidate for useful quantum applications.

Decoding Hyperdimensional Computing (HDC)

Appreciating the quantum innovation requires first understanding its classical inspiration, Hyperdimensional Computing (HDC). Also referred to as Vector Symbolic Architecture (VSA), HDC is a brain-inspired model that computes with very large vectors, often containing thousands of components. These vectors, called hypervectors, are usually pseudo-random, nearly orthogonal, and binary or real-valued.
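Near-orthogonality is easy to see numerically. The sketch below assumes dense bipolar hypervectors (components drawn uniformly from +1 and -1) and an illustrative dimensionality of 10,000; it draws a few at random and checks that their pairwise cosine similarity stays close to zero, on the order of 1/sqrt(D).

```python
import math
import random

D = 10_000  # illustrative dimensionality (assumption)

def random_hv(rng: random.Random, dim: int = D) -> list[int]:
    """Draw a dense bipolar hypervector with independent +1/-1 components."""
    return [rng.choice((-1, 1)) for _ in range(dim)]

def cosine(a: list[int], b: list[int]) -> float:
    """Cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / math.sqrt(sum(x * x for x in a) * sum(y * y for y in b))

rng = random.Random(0)
hvs = [random_hv(rng) for _ in range(5)]

# Largest |cosine| over all 10 pairs; for random bipolar vectors this is
# typically only a few multiples of 1/sqrt(D) = 0.01.
worst = max(abs(cosine(hvs[i], hvs[j])) for i in range(5) for j in range(i + 1, 5))
print(f"largest |cosine| among random pairs: {worst:.4f}")
```

Because the chance that two random hypervectors look similar shrinks as the dimension grows, each item can simply be given a fresh random vector.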

HDC owes its effectiveness to three essential characteristics: compact data representation, energy efficiency, and inherent robustness. Its high dimensionality makes an HDC system highly tolerant of noise and local failures, since corrupting a few elements of a 10,000-dimensional vector barely changes its overall meaning. Because information is spread across all components, this distributed and robust representation makes HDC particularly well suited to edge computing and resource-constrained devices, where low latency and energy efficiency are essential.
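The robustness claim can be checked the same way. In this sketch, again assuming bipolar hypervectors of dimension 10,000 (an illustrative choice), flipping 500 randomly chosen components (5%) leaves the cosine similarity with the original at (D - 2k)/D = 0.9, still far above the near-zero similarity to an unrelated hypervector. The helper `corrupt` is hypothetical.

```python
import math
import random

D = 10_000  # illustrative dimensionality (assumption)

def random_hv(rng, dim=D):
    """Dense bipolar hypervector with independent +1/-1 components."""
    return [rng.choice((-1, 1)) for _ in range(dim)]

def cosine(a, b):
    """Cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / math.sqrt(sum(x * x for x in a) * sum(y * y for y in b))

def corrupt(hv, n_flips, rng):
    """Return a copy of hv with n_flips distinct, randomly chosen components sign-flipped."""
    noisy = list(hv)
    for i in rng.sample(range(len(hv)), n_flips):
        noisy[i] = -noisy[i]
    return noisy

rng = random.Random(1)
original = random_hv(rng)
unrelated = random_hv(rng)
noisy = corrupt(original, 500, rng)  # corrupt 5% of the components

sim_noisy = cosine(original, noisy)          # (10000 - 2*500) / 10000 = 0.9
sim_unrelated = cosine(original, unrelated)  # close to 0
print(f"noisy copy: {sim_noisy:.3f}, unrelated vector: {sim_unrelated:.3f}")
```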

A standard HDC system encodes items such as words, features, or pixels by assigning each one a distinct hypervector. It then combines these vectors with simple, highly parallelizable operations to carry out computational tasks:

- Bundling: used for memory storage, aggregation, or averaging; it corresponds to a component-wise majority vote in binary vectors or to addition/averaging in real-valued vectors.

- Binding: used to link or associate data; it corresponds to XOR in binary vectors or to component-wise multiplication in real-valued vectors.

- Permutation: reorders a hypervector's components (commonly by a cyclic shift) so that data can be arranged or sequenced.
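The three operations can be sketched in a few lines of Python. This is one common instantiation, assumed here for illustration: bipolar vectors, where component-wise multiplication plays the role of XOR and bundling is a majority vote. The helper names (`bundle`, `bind`, `permute`) are hypothetical and not taken from a specific HDC library.

```python
import math
import random

D = 10_000  # illustrative dimensionality (assumption)

def random_hv(rng, dim=D):
    """Dense bipolar hypervector with independent +1/-1 components."""
    return [rng.choice((-1, 1)) for _ in range(dim)]

def cosine(a, b):
    """Cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / math.sqrt(sum(x * x for x in a) * sum(y * y for y in b))

def bundle(*hvs):
    """Bundling: component-wise majority vote (sign of the sum); an odd count avoids ties."""
    return [1 if sum(col) > 0 else -1 for col in zip(*hvs)]

def bind(a, b):
    """Binding: component-wise product, the bipolar counterpart of XOR; binding twice with b undoes it."""
    return [x * y for x, y in zip(a, b)]

def permute(hv, shift=1):
    """Permutation: cyclic shift of the components; a negative shift reverses it."""
    shift %= len(hv)
    return hv[-shift:] + hv[:-shift]

rng = random.Random(2)
a, b, c = (random_hv(rng) for _ in range(3))

s = bundle(a, b, c)
ab = bind(a, b)
pa = permute(a)
print("bundle vs a:", round(cosine(s, a), 3))     # near 0.5: the bundle resembles each member
print("bind vs a:", round(cosine(ab, a), 3))      # near 0: the bound pair resembles neither input
print("unbind recovers a:", bind(ab, b) == a)     # True
print("permuted vs a:", round(cosine(pa, a), 3))  # near 0
print("inverse permutation recovers a:", permute(pa, -1) == a)  # True
```

Bundling keeps the result similar to its inputs, while binding and permutation produce vectors dissimilar to their inputs yet exactly reversible, which is what lets HDC store sets, key-value pairs, and sequences in a single hypervector.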

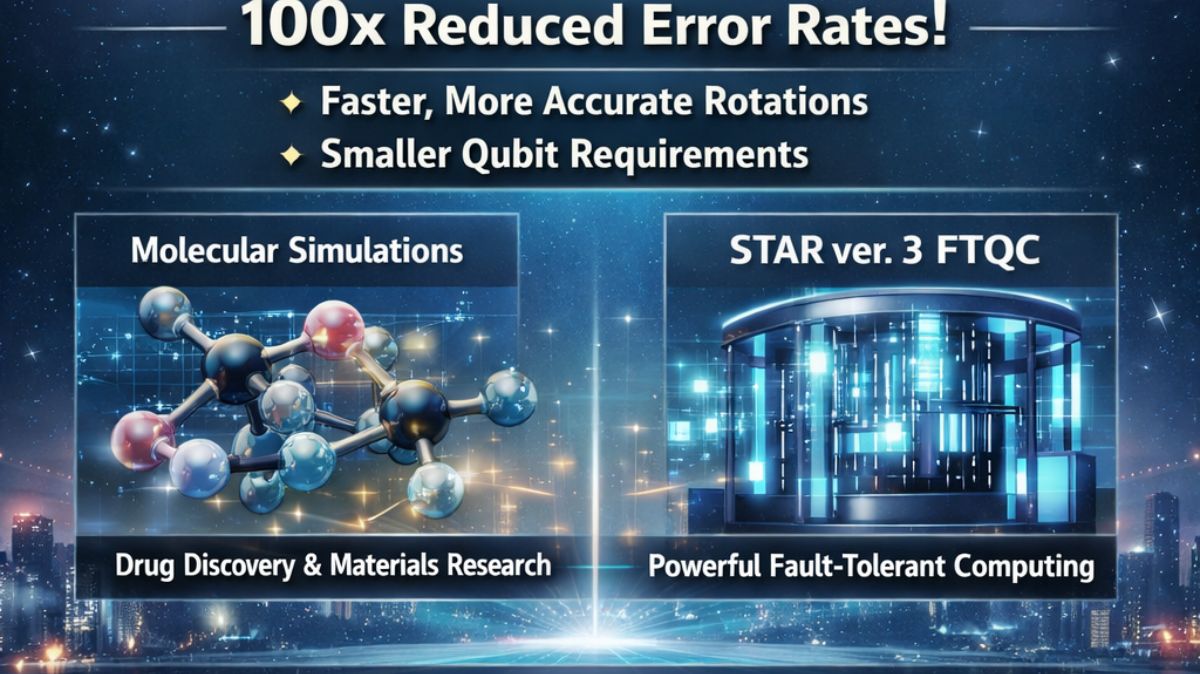

Conventional HDC already has many uses, especially in the biomedical field. It has shown great promise in bioinformatics and genomics, supporting tasks such as genome sketching, DNA sequence processing, and rapid analysis of large genomic datasets. HDC is also speeding up drug discovery by identifying promising candidates and predicting their properties.

In cancer research, HDC also helps analyze microbial profiles and classify cancer types from complex data such as DNA methylation patterns. Beyond genetics, it shows promise in predicting the toxicity of chemical substances and supports multi-modal data fusion, both for personalized treatment and for combining data from numerous sensors. Its efficiency and scalability have long made HDC look like a promising bridge to future quantum systems.


Mapping the Brain to the Qubit

QHDC's fundamental breakthrough is a direct, native mapping of HDC's main operations onto quantum physics that exploits the special properties of quantum states instead of relying on traditional workarounds.

Hypervectors as Quantum States: QHDC represents high-dimensional hypervectors as quantum states. Because an n-qubit state has 2^n amplitudes, a hypervector with thousands of components can be encoded compactly in a comparatively small number of qubits.
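As a rough illustration of why the encoding is compact, the sketch below uses plain amplitude encoding: the hypervector is zero-padded to a power-of-two length and normalized, so a 10,000-component vector fits in 14 qubits. The article does not say which state-preparation scheme QHDC uses, so this choice and the helper `encode_as_state` are assumptions. The code computes the amplitudes classically; it is not a state-preparation circuit.

```python
import math
import random

def encode_as_state(hv):
    """Amplitude-encode a real hypervector (assumed scheme, for illustration only).

    The vector is zero-padded to the next power of two and normalized, giving the
    amplitudes of a state on ceil(log2(len(hv))) qubits. Returns (n_qubits, amplitudes).
    """
    n_qubits = max(1, math.ceil(math.log2(len(hv))))
    padded = list(hv) + [0.0] * (2 ** n_qubits - len(hv))
    norm = math.sqrt(sum(x * x for x in padded))
    return n_qubits, [x / norm for x in padded]

rng = random.Random(3)
hv_a = [rng.choice((-1, 1)) for _ in range(10_000)]
hv_b = [rng.choice((-1, 1)) for _ in range(10_000)]

n, psi_a = encode_as_state(hv_a)
_, psi_b = encode_as_state(hv_b)
print(n)                          # 14 qubits: 2**14 = 16,384 amplitudes cover 10,000 components
print(sum(x * x for x in psi_a))  # approximately 1.0, i.e. a normalized state
overlap = sum(x * y for x, y in zip(psi_a, psi_b))        # <psi_a|psi_b> for real amplitudes
cos_ab = sum(x * y for x, y in zip(hv_a, hv_b)) / 10_000  # cosine of the raw bipolar vectors
print(abs(overlap - cos_ab) < 1e-9)  # True: state overlap equals cosine similarity
```

A convenient side effect of this choice is that the inner product of two encoded states equals the cosine similarity of the original hypervectors, so similarity in HDC becomes state overlap on the quantum side.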

- Bundling via Superposition: quantum superposition naturally realizes bundling, the operation that aggregates information. Just as conventional bundling combines several hypervectors, a superposition state represents a combination, or average, of several states at once.

- Binding via Entanglement: quantum entanglement naturally realizes binding, the conventional operation for associating data. Entanglement, the fundamentally non-classical correlation between quantum systems, offers a native and efficient way to link the informational content of two quantum hypervectors.
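The bundling-by-superposition analogy can be checked with a classical statevector calculation. The sketch below assumes each item is a bipolar hypervector encoded as equal-magnitude amplitudes and takes the bundle to be the normalized, equal-weight superposition of the item states; the helper names are hypothetical. It shows the bundle keeping an overlap of about 1/sqrt(3) with each of three members and near zero with an outsider.

```python
import math
import random

def random_state(rng, n_qubits):
    """Bipolar hypervector encoded as equal-magnitude amplitudes +/-1/sqrt(2**n_qubits)."""
    dim = 2 ** n_qubits
    return [rng.choice((-1.0, 1.0)) / math.sqrt(dim) for _ in range(dim)]

def superpose(*states):
    """Bundle states as the normalized equal-weight superposition sum_k |psi_k> / norm."""
    summed = [sum(col) for col in zip(*states)]
    norm = math.sqrt(sum(x * x for x in summed))
    return [x / norm for x in summed]

def overlap(a, b):
    """Inner product <a|b> for real amplitude vectors."""
    return sum(x * y for x, y in zip(a, b))

rng = random.Random(4)
members = [random_state(rng, 12) for _ in range(3)]  # three items on 12 qubits (4,096 amplitudes)
outsider = random_state(rng, 12)
bundled = superpose(*members)

print([round(overlap(bundled, m), 3) for m in members])  # each near 1/sqrt(3), about 0.577
print(round(overlap(bundled, outsider), 3))              # near 0
```

Unlike the majority-vote bundle of classical bipolar HDC, this superposition keeps the un-thresholded sum, matching the "averaging" form of bundling. Preparing such a sum on real hardware requires an actual circuit (LCU-style constructions are one option), which is not shown here.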

Using standard quantum circuits together with more advanced techniques, the researchers successfully executed all fundamental HDC operations: bundling, binding, permutation, and similarity measurement. These techniques included the Hadamard test and the Linear Combination of Unitaries (LCU). The Hadamard test efficiently estimates the similarity between two quantum states, a crucial step in HDC data analysis and pattern recognition, while LCU carries out complex operations by combining simpler unitary operations.
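The Hadamard test's measurement statistics can be simulated directly. The sketch below assumes a textbook variant in which the ancilla controls which of two states is prepared on the register; after the final Hadamard on the ancilla, it reads 0 with probability (1 + Re⟨ψ|φ⟩)/2, so repeated shots estimate the overlap. It is a classical simulation with hypothetical helper names, not the team's circuit.

```python
import math
import random

def hadamard_test_p0(psi, phi):
    """Exact P(ancilla = 0) for a Hadamard test comparing |psi> and |phi>.

    Assumed circuit: H on the ancilla; prepare |psi> on the register if the ancilla
    is |0> and |phi> if it is |1>; H on the ancilla; measure the ancilla.
    The joint state before measurement is |0>(psi+phi)/2 + |1>(psi-phi)/2.
    """
    branch0 = [(p + q) / 2 for p, q in zip(psi, phi)]
    return sum(abs(amp) ** 2 for amp in branch0)  # equals (1 + Re<psi|phi>) / 2

def estimate_similarity(psi, phi, shots, rng):
    """Simulate finite-shot sampling, then invert P(0) = (1 + Re<psi|phi>) / 2."""
    p0 = hadamard_test_p0(psi, phi)
    zeros = sum(rng.random() < p0 for _ in range(shots))
    return 2 * zeros / shots - 1

plus = [1 / math.sqrt(2), 1 / math.sqrt(2)]  # |+>
zero = [1.0, 0.0]                            # |0>
print(hadamard_test_p0(plus, plus))  # identical states: approximately 1.0
print(hadamard_test_p0(plus, zero))  # overlap 1/sqrt(2): about 0.854
est = estimate_similarity(plus, zero, 4000, random.Random(5))
print(est)  # noisy estimate of 1/sqrt(2), about 0.707
```

Each shot yields only one bit, so the estimate's precision improves roughly as 1/sqrt(shots); this sampling cost is part of what similarity measurement costs on real hardware.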

By designing a computational model that is naturally suited to quantum hardware, the team has moved beyond simply adapting classical machine learning for quantum processors and built a genuinely quantum-native framework.

Validation on IBM Hardware

The team developed and rigorously validated QHDC on IBM's Heron r3, a 156-qubit quantum processor, demonstrating its feasibility in practice as well as in theory. This step showed that the proposed quantum mappings hold up in a real quantum environment despite intrinsic noise and decoherence.

To test the framework's versatility, the research team completed two separate experiments: a supervised classification task and a symbolic analogical reasoning task. Together with comparisons against ideal quantum simulation and classical computation, these results confirm QHDC's physical realizability and show that it can carry out abstract reasoning and pattern recognition on a large-scale quantum processor, establishing it as a promising technology.
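For a sense of what symbolic analogical reasoning looks like in HDC, the sketch below reproduces the well-known "What is the dollar of Mexico?" example from the classical HDC literature, using bipolar hypervectors, component-wise multiplication for binding, and an un-thresholded sum for bundling. It is a classical illustration under those assumptions, not the task or data the team ran on the Heron r3 processor.

```python
import math
import random

D = 10_000  # illustrative dimensionality (assumption)

def random_hv(rng):
    """Dense bipolar hypervector with independent +1/-1 components."""
    return [rng.choice((-1, 1)) for _ in range(D)]

def bind(a, b):
    """Component-wise product; self-inverse for bipolar vectors."""
    return [x * y for x, y in zip(a, b)]

def add(a, b):
    """Un-thresholded bundling (component-wise sum)."""
    return [x + y for x, y in zip(a, b)]

def cosine(a, b):
    """Cosine similarity of two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / math.sqrt(sum(x * x for x in a) * sum(y * y for y in b))

rng = random.Random(6)
names = ["country", "currency", "usa", "mexico", "dollar", "peso"]
hv = {name: random_hv(rng) for name in names}

# Each record bundles role-filler pairs: country*usa + currency*dollar, and so on.
usa = add(bind(hv["country"], hv["usa"]), bind(hv["currency"], hv["dollar"]))
mex = add(bind(hv["country"], hv["mexico"]), bind(hv["currency"], hv["peso"]))

# "What is the dollar of Mexico?": query = dollar * USA * MEX, then clean up
# by picking the most similar known item.
query = bind(bind(hv["dollar"], usa), mex)
candidates = ["usa", "mexico", "dollar", "peso"]
answer = max(candidates, key=lambda name: cosine(query, hv[name]))
print(answer)  # peso
```

Binding "dollar" with the USA record leaves mostly the currency role (plus noise), and binding that with the Mexico record exposes Mexico's currency filler; the remaining terms are near-orthogonal noise, so `peso` scores about 0.5 while every other candidate stays near zero.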

The advent of Quantum Hyperdimensional Computing marks a turning point at the intersection of artificial intelligence, neuroscience, and quantum mechanics. By establishing an architectural blueprint for the next generation of advanced computation, the framework lays the foundation for a new era of resource-efficient quantum neuromorphic algorithms.

Future research will focus on scaling the framework to make full use of larger, more reliable quantum computers. Whether it is used to build highly adaptive AI systems, simulate the complex principles of human cognition, or fuse advanced multi-omics data for fully personalized treatment, the technology promises new ways to tackle challenging real-world problems. QHDC is a significant step toward computer systems that are powered by the laws of quantum physics and that operate not only on logic but also on the principles of the human brain.

