Inside the Quick Development of Quantum Processors: The Quantum Revolution Is Underway

Evolution of Quantum Processors

The quantum processor, the foundation of quantum technology, is developing quickly as companies increase qubit counts, extend coherence times, and test entirely new physical systems. This hardware ultimately determines how far quantum computing can advance. Understanding the components and operation of these processors is essential for assessing the current state of the art and what quantum hardware may make possible in the future.

Defining the Quantum Core: Qubits, Superposition, and Entanglement

A quantum processor is a specialized computer chip that processes data according to the rules of quantum mechanics. Classical bits store data as either 0 or 1. Qubits, the basic units of quantum information, can instead exist in a superposition: a weighted combination of 0 and 1 at the same time. Superposition is what gives quantum algorithms access to a form of large-scale parallelism. Qubits can also become entangled, so that their measurement outcomes are correlated in ways no classical system can reproduce.
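As a rough illustration of superposition and entanglement, the Python sketch below models qubit states as plain tuples of amplitudes. The names `ZERO`, `PLUS`, `BELL`, `probabilities`, and `is_product_state` are made up for this example and do not come from any quantum library. It is a toy numerical model, not a description of how a real processor stores qubits.

```python
import math

# A single-qubit state is a pair of amplitudes (a0, a1) with |a0|^2 + |a1|^2 = 1.
ZERO = (1 + 0j, 0j)                            # behaves like a classical 0
PLUS = (1 / math.sqrt(2), 1 / math.sqrt(2))    # equal superposition of 0 and 1

def probabilities(state):
    """Return the probability of each measurement outcome (squared magnitudes)."""
    return [abs(a) ** 2 for a in state]

# Two-qubit Bell state (|00> + |11>) / sqrt(2); amplitudes ordered 00, 01, 10, 11.
BELL = (1 / math.sqrt(2), 0.0, 0.0, 1 / math.sqrt(2))

def is_product_state(state, tol=1e-12):
    """A two-qubit pure state is unentangled exactly when a00*a11 - a01*a10 is zero."""
    a00, a01, a10, a11 = state
    return abs(a00 * a11 - a01 * a10) < tol
```

Under these assumptions, `probabilities(PLUS)` gives roughly equal weight to 0 and 1, while `is_product_state(BELL)` returns `False`: the Bell state cannot be written as two independent single-qubit states, which is what entanglement means in this model.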

Quantum processors promise significant speedups on certain hard problems, including optimization, cryptanalysis, and molecular simulation.

Harnessing Qubits: How the Processor Works

To manipulate qubits, a quantum processor uses quantum gates, which alter a qubit's state through controlled interactions such as laser beams or electromagnetic pulses. The control systems, made up of electronics and microwave or laser equipment, translate digital instructions into quantum operations by sending precisely timed signals that drive and measure the qubits.

Unlike classical logic gates, quantum gates rotate qubit states in a multidimensional space, and superposition lets a single operation act on many basis states at once. Because qubits are arranged so that they can be entangled, the processor can work with many possibilities simultaneously. Crucially, these operations must preserve coherence, so they are carried out in extremely low-noise environments. For some platforms that means millikelvin temperatures close to absolute zero, where thermal noise is too weak to cause rapid decoherence.
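The idea of a gate as a rotation of the qubit state can be sketched with ordinary 2x2 matrices. The example below is a minimal model that assumes an ideal, noise-free single qubit. The helper `apply_gate` and the constants `HADAMARD` and `X_GATE` are illustrative names, not part of any vendor's control software.

```python
import math

def apply_gate(gate, state):
    """Multiply a 2x2 gate matrix by a single-qubit state (a0, a1)."""
    (g00, g01), (g10, g11) = gate
    a0, a1 = state
    return (g00 * a0 + g01 * a1, g10 * a0 + g11 * a1)

S = 1 / math.sqrt(2)
HADAMARD = ((S, S), (S, -S))   # maps |0> to an equal superposition of 0 and 1
X_GATE = ((0, 1), (1, 0))      # quantum counterpart of a classical NOT

zero = (1.0, 0.0)
superposed = apply_gate(HADAMARD, zero)       # both amplitudes about 0.707
restored = apply_gate(HADAMARD, superposed)   # interference brings the state back to |0>
flipped = apply_gate(X_GATE, zero)            # the state |1>
```

Applying the Hadamard gate twice returns the original state because the two paths leading to 1 cancel. A classical random bit cannot do this, and the cancellation is the interference effect that quantum algorithms rely on.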

Once a quantum circuit has run, the processor measures the qubits. Measurement collapses the quantum state into definite classical values (0s and 1s), with probabilities determined by the computation.
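Because each measurement returns only one classical result, circuits are usually run many times and the results tallied. The sketch below imitates that sampling in plain Python, taking each outcome's probability to be the squared magnitude of its amplitude. The function `measure` and its `shots` and `seed` parameters are assumptions made for this illustration, not a real device API.

```python
import math
import random
from collections import Counter

def measure(state, shots, seed=None):
    """Sample `shots` measurement results from a list of amplitudes."""
    rng = random.Random(seed)
    n_qubits = (len(state) - 1).bit_length()
    # Label each basis state with its bit string, e.g. "00", "01", "10", "11".
    labels = [format(i, f"0{n_qubits}b") for i in range(len(state))]
    weights = [abs(a) ** 2 for a in state]
    return Counter(rng.choices(labels, weights=weights, k=shots))

# Bell state (|00> + |11>) / sqrt(2): only "00" and "11" can ever be observed.
bell = (1 / math.sqrt(2), 0.0, 0.0, 1 / math.sqrt(2))
counts = measure(bell, shots=1000, seed=7)
```

In this model the tallies for "00" and "11" each come out near half of the shots, and "01" and "10" never appear. That is the coordinated behavior of entangled qubits showing up in the measurement statistics.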

The Race for Scalability: Comparing Quantum Processor Types

Different architectures of quantum processors are available, each based on a distinct physical system:

Superconducting Qubits: These processors use microwave pulses to manipulate tiny electrical circuits chilled to almost absolute zero. Their quick gate speeds and relative ease of scaling allow hundreds to thousands of qubits to be placed on a chip. Although noise and short coherence times mean it needs extensive quantum error correction, this architecture is one of the most well-established and widely used.

Trapped Ions: Qubits are stored in charged atoms that are suspended in electromagnetic fields and controlled by laser pulses. Because the ions are naturally identical, they offer consistent performance and stability. Scaling is the main obstacle, since it is hard to control long chains of ions and manage the intricate laser systems they require.

Photonic Processors: These devices use individual photons, the particles of light, as qubits. Photons can operate at ambient temperature, and because they rarely interact with their surroundings they naturally avoid many noise sources. They excel at quantum networking and secure communication. However, producing and managing large numbers of identical photons and achieving strong interactions between them remain difficult.

Neutral Atom Systems: These devices use arrays of focused laser beams known as optical tweezers to trap atoms, usually rubidium or cesium. They provide reconfigurable qubit layouts and strong two-qubit interactions. They scale well, with systems of hundreds of qubits already possible, which makes them well suited to quantum optimization and simulation.

Topological Qubits: Currently at the experimental stage, this method seeks to make quantum information intrinsically noise-resistant by encoding it in the global topology of exotic quantum states. By distributing data among "braided" quasiparticles, it could greatly lower error rates and simplify error correction, resulting in computers that are fault-tolerant and scalable.

Leading the Charge: Examples of Deployed and Experimental Processors

Several leading quantum computing companies are actively developing platforms based on these physical approaches:

Google Willow (Superconducting): Google’s most recent generation of superconducting technology aims to achieve an error-corrected logical qubit by enhancing coherence time and gate fidelity. Willow improves error-correction performance by being uniformly and modularly optimized.

Rigetti Ankaa (Modular Superconducting): The goal of this family of chips is to combine several mid-sized chips into a bigger, scalable computer system using interposers and tunable couplers.

Xanadu Borealis/Osprey (Photonic): For applications such as Gaussian boson sampling, these processors control squeezed light pulses via optical interferometers. Borealis is known for having been made publicly accessible online.

PsiQuantum Q1 (Photonic): This platform uses CMOS fabrication to mass-produce optical components, with the goal of building a one-million-qubit error-corrected quantum computer.

Microsoft Majorana-1 (Topological): This experimental research testbed was created to investigate topological qubits. It integrates hybrid semiconductor-superconductor nanostructures designed to host Majorana zero modes.

The Path Forward: Modular Design and Specialized Accelerators

Classical processors (CPUs) and quantum processors are fundamentally different. Conventional systems perform well on general-purpose tasks by sequentially executing instructions on binary bits. Quantum processors, by exploiting superposition and entanglement, act as powerful accelerators for a limited yet potentially revolutionary set of specialized problems where classical scaling fails.

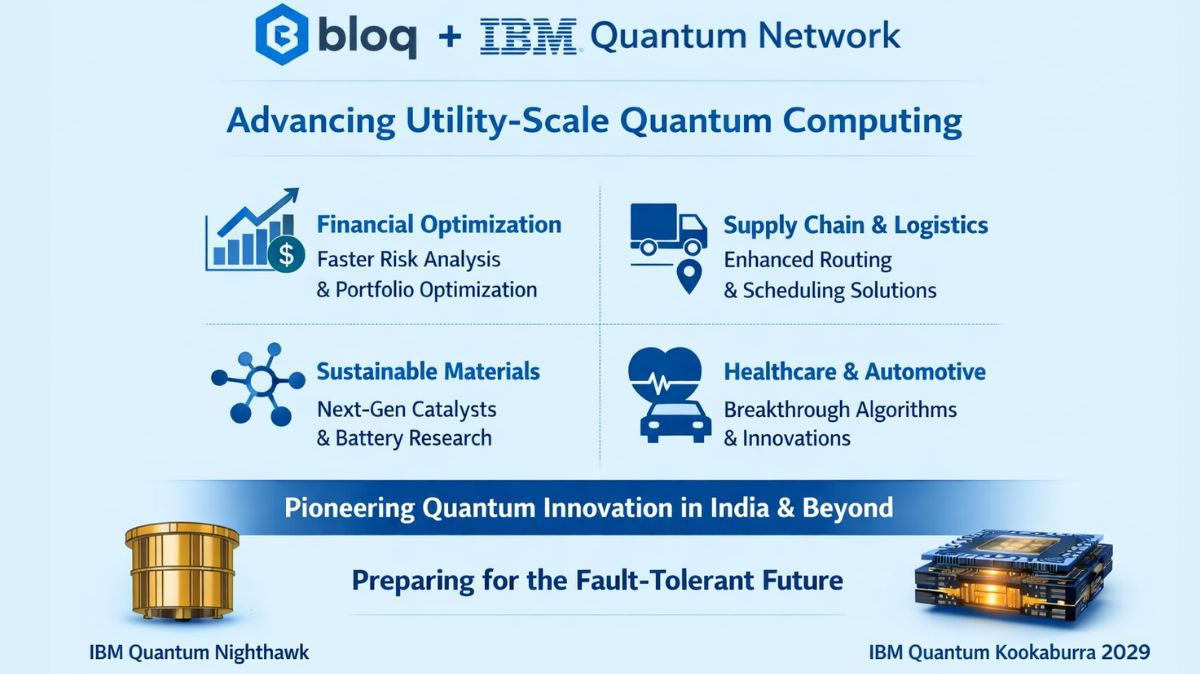

Three trends are shaping the next generation of quantum processors: stronger error correction, fully integrated hybrid systems, and modular architectures. Companies are shifting away from chasing ever-larger monolithic chips and towards connecting smaller, high-fidelity units via microwave or photonic interconnects, a strategy similar to traditional supercomputing clusters.

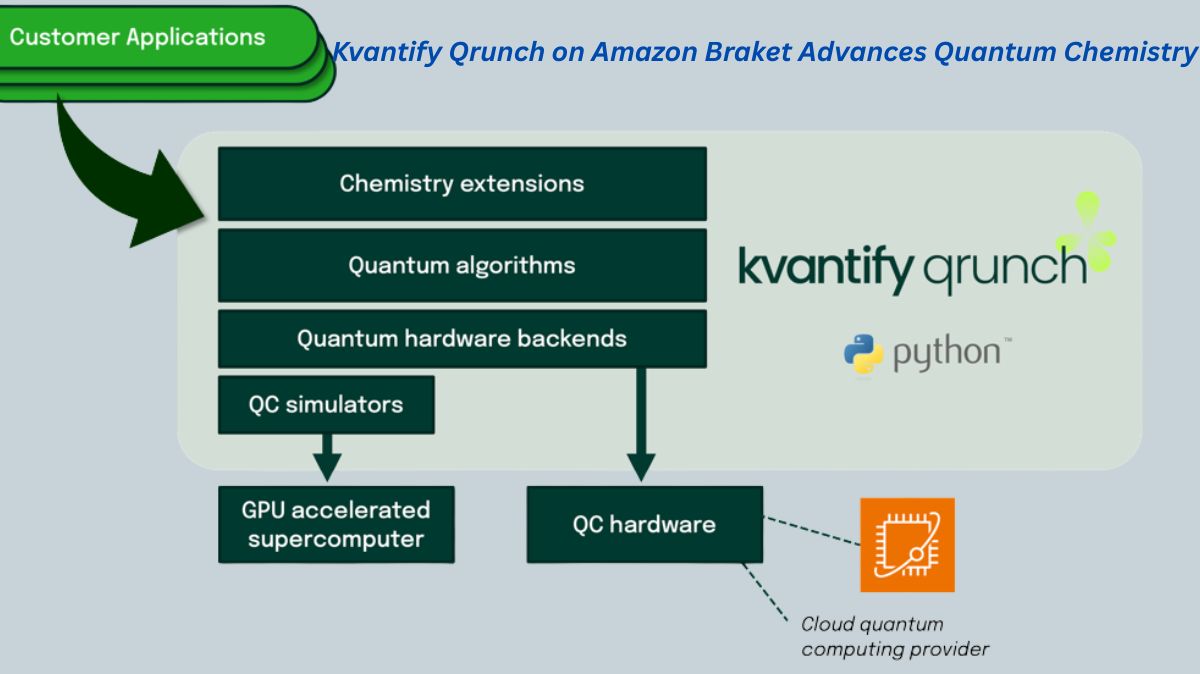

Another significant change is the appearance of application-specific quantum processors (AQPs). Scientists are creating chips for particular computing fields, such as chemistry-focused AQPs that are best suited for modelling electron interactions. This movement towards specialized tools is comparable to the development of GPUs and TPUs in classical computing.

So far, no single architecture has proven to be the best approach. Rather, the field is moving forward with parallel efforts that each optimize error rates, scalability, and coherence in different ways. Quantum processors will progressively transition from experimental prototypes to specialized computing tools as these technologies mature.
