In the current era of the “data explosion,” one of computer science’s greatest difficulties is organizing and deriving meaning from large, unstructured datasets. Efficient quantum spectral clustering is a “holy grail” for artificial intelligence, with potential uses ranging from spotting rare celestial bodies in the remote reaches of space to mapping the social ties of billions of people.

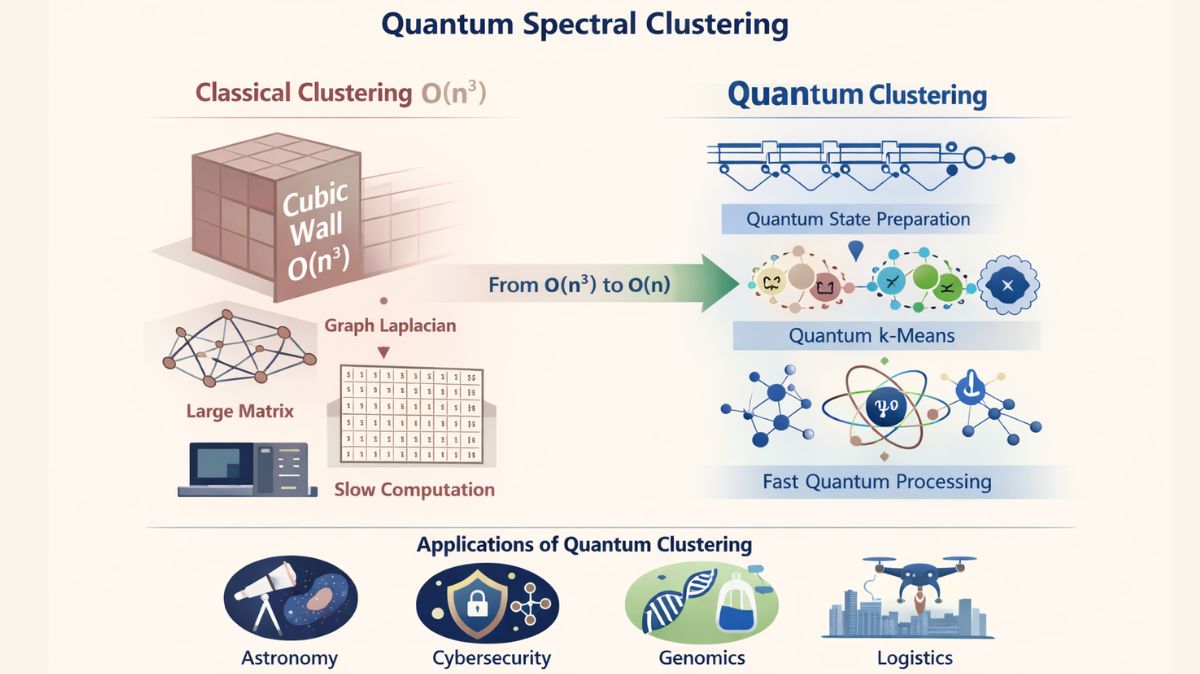

The “cubic wall” is a computational barrier that standard classical algorithms run into as datasets get larger. According to recent advances in quantum spectral clustering described in two works, the principles of quantum mechanics may hold the key to overcoming this processing limit.

The Limitation of Classical Clustering

To appreciate the scope of this result, it helps to understand the fundamental limits of the way we handle information today. Clustering is the basic task of grouping data points so that items in one group are more similar to one another than to those in other groups. Spectral clustering is a more advanced form of this, which views data points as “nodes” in a graph joined by “edges” that indicate similarity.

By studying the “spectrum” (eigenvalues and eigenvectors) of the graph Laplacian matrix, researchers can project complex, non-linear data into a lower-dimensional space where it is easily separable. This is critical for datasets where k-means or other simpler methods fail to discover complex patterns. However, classical spectral clustering requires O(n³) operations for n points, so doubling the size of a dataset increases the computational work eightfold (2³ = 8). This cubic growth has emerged as a major constraint in data science for the huge datasets of the 2020s.
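
To make the classical pipeline concrete, here is a minimal sketch in Python with NumPy. It is an illustration under stated assumptions, not the method of either paper: the toy graph is hypothetical, and for two clusters it splits on the sign of the second eigenvector (the Fiedler vector) instead of running k-means on several eigenvectors. The dense eigendecomposition is the step responsible for the cubic cost.

```python
import numpy as np

def spectral_bipartition(similarity):
    """Split a graph into two clusters using the sign of the Fiedler vector."""
    W = np.asarray(similarity, dtype=float)
    degree = W.sum(axis=1)
    laplacian = np.diag(degree) - W  # unnormalized graph Laplacian L = D - W
    # Dense symmetric eigendecomposition: this is the O(n^3) bottleneck.
    eigenvalues, eigenvectors = np.linalg.eigh(laplacian)
    fiedler = eigenvectors[:, 1]  # eigenvector of the second-smallest eigenvalue
    return (fiedler > 0).astype(int)

# Hypothetical toy graph: two triangles joined by a single weak edge.
W = np.zeros((6, 6))
for a, b in [(0, 1), (0, 2), (1, 2), (3, 4), (3, 5), (4, 5)]:
    W[a, b] = W[b, a] = 1.0
W[2, 3] = W[3, 2] = 0.1  # weak bridge between the two groups

labels = spectral_bipartition(W)
print(labels)  # nodes 0-2 share one label, nodes 3-5 the other
```

Which group receives label 0 and which receives label 1 depends on the arbitrary sign of the eigenvector, so only the grouping itself is meaningful.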

The Quantum Leap: From O(n³) to Near-Linear Complexity

“Quantum spectral clustering: Comparing classical and quantum algorithms for graph partitioning,” a recent paper published in Applied Physics Reviews, proposes an “end-to-end” quantum algorithm intended to break through this cubic wall. The researchers show that the computational complexity can be reduced from cubic (n³) to almost linear (n) by exploiting superposition and entanglement.
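
The gap between the two scaling laws can be checked with a few lines of arithmetic. The sketch below is only an illustration: the helper name `work_growth` is hypothetical, and “almost linear” is modeled as exactly linear, ignoring any logarithmic or precision-dependent factors the real algorithm may carry.

```python
def work_growth(exponent, size_ratio=2):
    """Factor by which the operation count grows when n is multiplied by size_ratio."""
    return size_ratio ** exponent

print(work_growth(3))  # cubic: doubling n multiplies the work by 8
print(work_growth(1))  # linear: doubling n multiplies the work by 2

# Rough operation counts (constants ignored) for a few dataset sizes.
for n in (10**3, 10**6, 10**9):
    print(f"n = {n:.0e}: cubic ~ {n**3:.0e} ops, linear ~ {n:.0e} ops")
```

At a billion points the cubic count is larger than the linear one by a factor of n², which is 10¹⁸.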

The study claims that this quantum algorithm functions in two main stages:

- Quantum State Preparation: The approach creates a quantum representation of the projected Laplacian matrix by encoding data into the physical state of qubits via Hamiltonian simulation.

- Quantum k-means: After projecting the data into this “quantum space,” the method uses a quantum variant of k-means to determine the final clusters.

The strength of this method lies in its ability to carry out the most difficult mathematical operations, such as identifying eigenvectors, without ever having to build the enormous, memory-intensive matrices that often cause classical supercomputers to falter.

Solving the “Mixed Graph” Mystery

The study also tackles mixed graphs, a recurring challenge in data science. Real-world relationships are rarely of a single kind: a social network has “follows” (directed), “friends” (undirected), and “mutuals” (mixed). Because the resulting matrices are not symmetric, classical algorithms frequently run into numerical instability when dealing with directed graphs.

The quantum algorithm uses a bipartite maximally entangled initial state to address these asymmetries. This makes it possible for researchers to find hidden characteristics and recurring patterns (motifs) in mixed graphs that were previously undetectable to classical machines.
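
The asymmetry problem is easy to reproduce classically. The sketch below uses a Hermitian adjacency matrix, a standard encoding from the mixed-graph literature in which an arc u → v is stored as the imaginary unit i and its reverse as −i. This is an assumption made for illustration; the article does not say which encoding the paper uses, and the three-node graph is hypothetical.

```python
import numpy as np

def plain_adjacency(n, undirected, directed):
    """Ordinary 0/1 adjacency matrix; not symmetric once arcs are present."""
    A = np.zeros((n, n))
    for u, v in undirected:
        A[u, v] = A[v, u] = 1.0
    for u, v in directed:  # arc u -> v
        A[u, v] = 1.0
    return A

def hermitian_adjacency(n, undirected, directed):
    """Hermitian encoding: undirected edge -> 1, arc u -> v -> +i (and -i reversed)."""
    H = np.zeros((n, n), dtype=complex)
    for u, v in undirected:
        H[u, v] = H[v, u] = 1.0
    for u, v in directed:
        H[u, v] = 1j
        H[v, u] = -1j
    return H

# Hypothetical mixed graph: one "friend" edge and two "follow" arcs.
undirected = [(0, 1)]
directed = [(1, 2), (2, 0)]

A = plain_adjacency(3, undirected, directed)
H = hermitian_adjacency(3, undirected, directed)

print(np.allclose(A, A.T))         # False: the plain matrix is not symmetric
print(np.linalg.eigvals(A))        # includes a complex-conjugate pair
print(np.allclose(H, H.conj().T))  # True: the encoded matrix is Hermitian
print(np.linalg.eigvalsh(H))       # all eigenvalues are real
```

A Hermitian matrix always has real eigenvalues and orthogonal eigenvectors, which is what spectral methods rely on, while still recording the direction of each arc.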

Avoiding the QRAM Hurdle

Quantum Random Access Memory (QRAM), a hardware component that does not yet exist in a practical form, is a prerequisite for several theoretical quantum machine learning (QML) proposals. The approach described in this study circumvents the problem by combining Grover’s search with Quantum Phase Estimation (QPE). Grover’s search optimizes the cluster selection, while phase estimation enables the computer to extract eigenvalues directly from the quantum state. According to the study, this keeps the algorithm “efficiently simulatable” on current hardware while still being prepared for “quantum supremacy” in the future.
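
To illustrate how phase estimation turns an eigenvalue into a measurable bit string, the sketch below classically simulates the readout statistics of textbook QPE for an exact eigenstate. It is not the circuit from the paper. The 4-cycle graph, the `scale` parameter (an upper bound used to map eigenvalues into phases in [0, 1)), and the function names are all assumptions, and the eigenvalues are computed classically only to feed the simulation.

```python
import numpy as np

def qpe_probabilities(phase, n_ancilla):
    """Readout distribution of textbook QPE for an eigenstate with U|v> = e^{2*pi*i*phase}|v>."""
    size = 2 ** n_ancilla
    idx = np.arange(size)
    # Ancilla register after the controlled-U powers: sum_j e^{2*pi*i*j*phase} |j> / sqrt(size)
    register = np.exp(2j * np.pi * idx * phase) / np.sqrt(size)
    # The inverse quantum Fourier transform matches NumPy's forward FFT up to normalization.
    amplitudes = np.fft.fft(register) / np.sqrt(size)
    return np.abs(amplitudes) ** 2

def estimate_eigenvalue(eigenvalue, scale, n_ancilla):
    """Most likely QPE estimate for U = exp(2*pi*i*L/scale); requires eigenvalue < scale."""
    probs = qpe_probabilities(eigenvalue / scale, n_ancilla)
    readout = int(np.argmax(probs))
    return readout / 2 ** n_ancilla * scale

# Hypothetical example: Laplacian of a 4-cycle, whose eigenvalues are 0, 2, 2, 4.
W = np.array([[0, 1, 0, 1],
              [1, 0, 1, 0],
              [0, 1, 0, 1],
              [1, 0, 1, 0]], dtype=float)
L = np.diag(W.sum(axis=1)) - W
true_eigenvalues = np.linalg.eigvalsh(L)

estimates = [estimate_eigenvalue(lam, scale=8.0, n_ancilla=3) for lam in true_eigenvalues]
print(estimates)
```

With three ancilla qubits the phases 0, 1/4 and 1/2 are exactly representable, so each estimate matches its eigenvalue. For phases that are not multiples of 1/8, the readout distribution spreads over neighboring values and more ancilla qubits are needed for the same accuracy.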

A New Frontier: Neuromorphic Quantum Kernels

While other researchers concentrate on gate-based algorithms, “Quantum Kernels and Neuromorphic Neurons in quantum spectral clustering” delves into quantum neuromorphic computing. In this work, parameterized quantum kernels (pQK) and quantum leaky integrate-and-fire (QLIF) neurons used as kernel generators are directly compared for the first time.

The pQK method in this framework optimizes features to reflect distances in the feature space by encoding them using trainable single-qubit rotations. In contrast, the neuromorphic technique uses population coding to convert classical input into “spike trains” that are subsequently processed by QLIF neurons using temporal distance metrics.
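
A kernel built from single-qubit rotations can be sketched as follows. This is a simplified stand-in for the paper’s pQK and not its actual circuit: it assumes one qubit per feature, an RY rotation by `weights[j] * x[j]`, and a kernel defined as the squared overlap of the two encoded states. The `weights` array plays the role of the trainable parameters, and the training loop is omitted.

```python
import numpy as np

def encode(x, weights):
    """Product state with one qubit per feature: RY(w_j * x_j)|0> on qubit j."""
    state = np.array([1.0])
    for xj, wj in zip(x, weights):
        angle = wj * xj
        qubit = np.array([np.cos(angle / 2), np.sin(angle / 2)])  # RY(angle)|0>
        state = np.kron(state, qubit)
    return state

def pqk_kernel(x, y, weights):
    """Fidelity kernel k(x, y) = |<psi(x)|psi(y)>|^2 between encoded states."""
    return float(np.dot(encode(x, weights), encode(y, weights)) ** 2)

def kernel_matrix(X, weights):
    """Pairwise kernel values, usable as the similarity graph for spectral clustering."""
    n = len(X)
    return np.array([[pqk_kernel(X[i], X[j], weights) for j in range(n)] for i in range(n)])

# Hypothetical data and parameter values.
X = np.array([[0.1, 0.2], [0.15, 0.25], [2.0, 2.5]])
weights = np.array([1.0, 1.0])
K = kernel_matrix(X, weights)
print(np.round(K, 3))
```

For this encoding the kernel has the closed form ∏ cos²(w_j (x_j − y_j) / 2), so adjusting the weights rescales how quickly similarity decays along each feature, which is one way rotations can be tuned to reflect distances in feature space.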

The study found that the pQK method worked better on higher-dimensional datasets, including those from the Sloan Digital Sky Survey (SDSS), while the neuromorphic QLIF kernel frequently produced superior results on synthetic and smaller datasets. This suggests that different quantum methods may suit different kinds of data complexity.

Real-World Implications and the Road Ahead

Several industries could be significantly affected by the switch from O(n³) to near-linear-time algorithms:

- Astronomy: Finding patterns among astronomical objects such as stars and galaxies in large datasets like the SDSS, where unsupervised techniques are needed.

- Cybersecurity: Spotting botnet communities or anomalous network traffic patterns that indicate an attack.

- Genomics: Grouping gene expression information to find novel protein interactions or disease subtypes.

- Logistics: Segmenting metropolitan grids to maximize drone delivery or autonomous vehicle traffic.

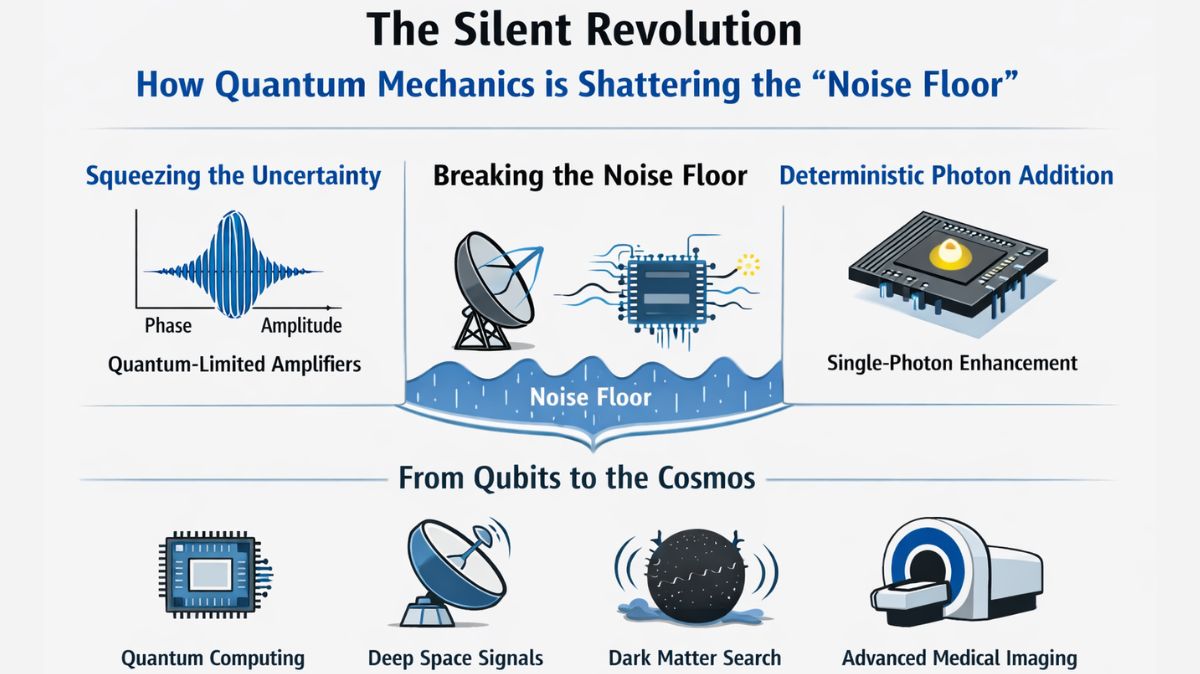

Researchers stay grounded despite the enormous possibilities. We live in the Noisy Intermediate-Scale Quantum (NISQ) era, where errors and environmental “noise” can affect hardware. Although the theoretical speedup is revolutionary, strong Quantum Error Correction (QEC) is still needed for practical implementation.

In conclusion

It is evident that theoretical “toy models” are giving way to functional algorithms that address real mathematical barriers in quantum machine learning. Quantum spectral clustering not only speeds up computation by converting the “cubic wall” into a near-linear path, but also broadens the “spectrum” of our understanding of the complicated world we live in. The patterns concealed in our most complicated data are finally starting to show as quantum hardware continues to mature.
